🗒️ All notes

Unorganised fleeting thoughts...

2025・07・07

This blog post has gotten me thinking about why I keep my notes here. Though this whole system was originally built around zettelkasten, my latest website design, which uses these notes as a foundation, has shifted to them being just notes. A stream of consciousness.

I agree with the anxieties that come with a second brain, but I think there is still value in seeing my archive of thoughts. It grounds me to see where I came from and where I am now.

I’m keeping my notes for now.

2025・06・16

Another article about creating grainy backgrounds with SVGs. Obsessed with this effect

2025・06・15

I’ve been bullish on AR for a few years now, but the convergence of AI + AR to create XR is feeling more real. Watched Android XR: A New Reality Powering Headset and Glasses

- AI is to XR as touch screens were to smart phones

- Android XR is doubling down on snapdragon

- get ready for https://developer.android.com/xr

- web-xr could be a good starting point https://developer.android.com/develop/xr/develop-with-webxr

2025・06・12

A useful tool for testing Open Graph images for websites.

To test OG images locally, use this extension: https://addons.mozilla.org/en-GB/firefox/addon/open-graph-preview-and-debug/

2025・06・11

I like the concept of neverthrow. Interesting talk about it - https://youtu.be/VcOIz7tOBoM?si=DdxZlZkEX48t4YJi

- try catch is hidden control flow, and the caught error is unknown, so it takes discipline to check every instanceof an error could be, which is not scalable in larger teams.

- neverthrow is useful when your service has multiple error states to handle

- catch should only be for truly exceptional circumstances which we can’t control.
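A minimal sketch of the idea in plain TypeScript. This is a hand-rolled Result type, not neverthrow’s actual API, and the findUser service, its error kinds and the sample user are all made up for illustration. The point is that the error states are part of the return type, so the compiler makes the caller handle each one.

```typescript
// Hand-rolled Result type: illustrates the idea, NOT neverthrow's real API.
type Ok<T> = { ok: true; value: T };
type Err<E> = { ok: false; error: E };
type Result<T, E> = Ok<T> | Err<E>;

function ok<T>(value: T): Ok<T> {
  return { ok: true, value };
}

function err<E>(error: E): Err<E> {
  return { ok: false, error };
}

// Hypothetical error states for a made-up "find user" service.
type FindUserError =
  | { kind: "not-found"; id: string }
  | { kind: "invalid-id"; reason: string };

type User = { id: string; name: string };

// Stand-in for a database.
const users: Record<string, User> = { u1: { id: "u1", name: "Ada" } };

// Every failure mode is visible in the signature; nothing is thrown.
function findUser(id: string): Result<User, FindUserError> {
  if (!/^u\d+$/.test(id)) {
    return err<FindUserError>({ kind: "invalid-id", reason: "expected the form u<number>" });
  }
  const user = users[id];
  return user ? ok(user) : err<FindUserError>({ kind: "not-found", id });
}

// The caller has to narrow on `ok` and then on `kind` before touching the data.
function describeLookup(id: string): string {
  const result = findUser(id);
  if (result.ok) return `found ${result.value.name}`;
  switch (result.error.kind) {
    case "not-found":
      return `no user with id ${result.error.id}`;
    case "invalid-id":
      return `bad id: ${result.error.reason}`;
  }
}
```

try catch would still wrap the truly exceptional stuff (network down, out of memory), while the expected failures travel through the return value.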

2025・06・05

Learning some cool things about Cloudflare workers as I try to preserve note.chiubaca.com as part of my blog rebuild.

I don’t need to maintain another astro project for my notes and my main blog AND have a sub-domain for my notes

note.chiubaca.com can be a worker that proxies a request from chiubaca.com/fleeting-notes. this is such a eureka moment for me.

It’s quite nuanced though, as we also need to proxy all the CSS and JS that comes along with it. This is where I’ve learnt about the Document <base> element, which lets all your website’s relative URLs resolve against a different website.

To inject this tag we can use Cloudflare’s HTMLRewriter, which can rewrite the response we proxied across.

2025・06・01

Creating a GitHub action with an on trigger of workflow_dispatch essentially gives you a webhook to run anything.

For example, if I have a GitHub action called deploy.yml in a GitHub repo at my-code/your-repo, I can trigger it with the following curl:

curl -X POST \

https://api.github.com/repos/my-code/your-repo/actions/workflows/deploy.yml/dispatches \

-H "Authorization: token YOUR_GITHUB_PAT" \

-H "Accept: application/vnd.github.v3+json" \

-H "Content-Type: application/json" \

-d '{"ref":"main"}'

For some reason Cloudflare Workers don’t have a deploy webhook out of the box like Cloudflare Pages, so this solution works as a pretty good alternative. Here’s the action that redeploys my website:

# deploy-chiubaca-com.yml
name: Redeploy chiubaca.com to Cloudflare worker
on:
  workflow_dispatch:
jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      - uses: pnpm/action-setup@v2
        with:
          version: 8
      - name: Install dependencies
        run: pnpm install
      - name: Build chiubaca.com
        run: pnpm -w run chiubaca.com:build
      - name: Publish to Cloudflare
        run: pnpm -w run chiubaca.com:deploy
        env:
          CLOUDFLARE_API_TOKEN: ${{ secrets.CLOUDFLARE_API_TOKEN }}

Like in the above example, I can execute this action just by running:

curl -X POST \

https://api.github.com/repos/chiubaca/chiubaca-monorepo/actions/workflows/deploy-chiubaca-com.yml/dispatches \

-H "Authorization: token ${{secrets.GHUB_PAT_FOR_CHIUBACA_COM_REDEPLOY_ACTION}}" \

-H "Accept: application/vnd.github.v3+json" \

-H "Content-Type: application/json" \

-d '{"ref":"main"}'
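The same call can be made from code instead of curl. A rough sketch in TypeScript that only builds the request, mirroring the headers from the curl above. buildDispatchRequest and the DispatchRequest shape are names I made up, and YOUR_GITHUB_PAT is a placeholder.

```typescript
// Shape of what we would hand to fetch(url, init). Hypothetical helper type.
interface DispatchRequest {
  url: string;
  init: {
    method: "POST";
    headers: Record<string, string>;
    body: string;
  };
}

// Builds a workflow_dispatch request for a given repo and workflow file.
function buildDispatchRequest(
  owner: string,
  repo: string,
  workflowFile: string,
  ref: string,
  token: string,
): DispatchRequest {
  const url = `https://api.github.com/repos/${owner}/${repo}/actions/workflows/${workflowFile}/dispatches`;
  return {
    url,
    init: {
      method: "POST",
      headers: {
        Authorization: `token ${token}`,
        Accept: "application/vnd.github.v3+json",
        "Content-Type": "application/json",
      },
      // `ref` is the branch or tag the workflow should run against.
      body: JSON.stringify({ ref }),
    },
  };
}

const dispatchRequest = buildDispatchRequest(
  "chiubaca",
  "chiubaca-monorepo",
  "deploy-chiubaca-com.yml",
  "main",
  "YOUR_GITHUB_PAT", // placeholder, never commit a real token
);

// To actually trigger the workflow:
// await fetch(dispatchRequest.url, dispatchRequest.init);
```

Keeping the request building separate from the fetch call makes it easy to check the URL and payload without hitting GitHub.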

2025・05・31

testing github action 12343321

2025・05・29

best favicon and icon generator i’ve used so far https://realfavicongenerator.net/

2025・05・14

Next.js caching is quite complex, but it’s relatively well outlined here https://nextjs.org/docs/app/deep-dive/caching

key takeaways so far

- shared fetch calls which are used in different RSCs have a memoised response. Therefore this fetch:

const dogResp = await fetch(`https://dog.ceo/api/breeds/image/random`);

const dogJson = await dogResp.json();

would return the same response across all React server components that called it.

This is a React feature and not a Next.js feature. Only GET requests get memoised, so GQL queries don’t have this side effect, as GQL queries are made via a POST request. The workaround is to wrap these API calls in React’s cache().

- export const dynamic = "force-dynamic"; is what forces Next.js to render the page as a dynamic page; otherwise it will statically render it.

There are four levels of caching to be aware of:

| Mechanism | What | Where | Purpose | Duration |

|---|---|---|---|---|

| Request Memoization | Return values of functions | Server | Re-use data in a React Component tree | Per-request lifecycle |

| Data Cache | Data | Server | Store data across user requests and deployments | Persistent (can be revalidated) |

| Full Route Cache | HTML and RSC payload | Server | Reduce rendering cost and improve performance | Persistent (can be revalidated) |

| Router Cache | RSC Payload | Client | Reduce server requests on navigation | User session or time-based |

2025・05・07

uv seems to be the modern way to manage python these days https://docs.astral.sh/uv/

great summary of what MCP is https://www.youtube.com/watch?v=7j_NE6Pjv-E

- LLMs are not useful by themselves

- connecting LLMs to tools make them more useful

- MCP is a standard protocol to link tools / services / APIs to LLMs

2025・05・05

move my whole notion workflow to obsidian? I need kanbans… https://github.com/mgmeyers/obsidian-kanban

2025・05・03

real cheap VPS https://www.netcup.com/en/server/arm-server

build AI agents with TypeScript (Mastra) https://www.youtube.com/live/pWnVTK-3V5U

2025・04・24

TIL: you can apply a generic to a React component e.g

type Step = "property-type" | "semi-or-detached" | "property-attributes" | "choose-similar"

<ProgressBar<Step>

currentStep={stepParam}

stepToProgressPercentage={stepsToProgressPercentage}

/>
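Roughly what the props for a component like that could look like. This is a sketch, not the real ProgressBar: I’ve used a plain function instead of JSX so it runs without React, and ProgressBarProps, progressFor and the percentages are all assumptions for illustration.

```typescript
type Step =
  | "property-type"
  | "semi-or-detached"
  | "property-attributes"
  | "choose-similar";

// Generic props: S is whatever union of step names the caller supplies,
// which ties the keys of the lookup to the type of currentStep.
interface ProgressBarProps<S extends string> {
  currentStep: S;
  stepToProgressPercentage: Record<S, number>;
}

// Plain-function stand-in for the component body (hypothetical).
function progressFor<S extends string>(props: ProgressBarProps<S>): number {
  return props.stepToProgressPercentage[props.currentStep];
}

// Made-up percentages, for illustration only.
const exampleStepPercentages: Record<Step, number> = {
  "property-type": 25,
  "semi-or-detached": 50,
  "property-attributes": 75,
  "choose-similar": 100,
};

// Passing <Step> explicitly is the function equivalent of <ProgressBar<Step> ... />.
const percent = progressFor<Step>({
  currentStep: "semi-or-detached",
  stepToProgressPercentage: exampleStepPercentages,
});
```

With the generic pinned, a typo in currentStep or a missing key in the lookup becomes a type error rather than a runtime surprise.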

2025・04・23

zod based cli builder https://github.com/tunnckoCore/zodest

2025・04・20

2025・04・19

Interesting tool to check for handle availability. Not super reliable, but interesting modular architecture. https://sherlockproject.xyz/

2025・04・18

Finally getting around to self-hosting Convex. Want to try this with Tanstack Start. Following the docs just works, and it runs on SQLite, so it will be interesting to see how far it scales…

- https://docs.convex.dev/self-hosting

- https://github.com/get-convex/convex-backend/blob/main/self-hosted/fly/README.md

better auth convex adapter looks useful

2025・04・13

the ux of a TUI but in the web https://github.com/webtui/webtui

2025・04・11

check the pending state of route navigation in nextjs app router with useLinkStatus. Use for creating loading states.

2025・04・07

Durable Objects now have a free tier! https://developers.cloudflare.com/changelog/2025-04-07-durable-objects-free-tier/

2025・04・05

https://animejs.com/ looks soo good. I want to play around with this lib when I get a chance. I’ve wanted to do a mapbox scrollytelling application for a long time now, this could be a great way to do it.

2025・03・27

hard reset git to the commit before the last one (this drops the latest commit)

git reset --hard HEAD~

# same as

git reset --hard HEAD~1

then git push -f

2025・03・09

live in the past, you will be depressed

live in the future, you will be anxious

live in the present, you will be happy

2025・03・04

Watching Web without walls.

- you can create your own custom feeds, e.g. I could look up people posting about parenting / dads etc

- bsky is using the at:// protocol

- you can host your own bluesky data

- you can write custom events to atproto and build your own applications like this

- bsky.app is an aggregator of atprotos

- custom apps can be built on atproto

  - meetup clone https://smokesignal.events/

  - hnews clone

2025・03・03

someone made a TS codegen for pocketbase. It auto-generates zod schemas as you update your pocketbase schema.

2025・03・01

I didn’t get fly.io for the longest time. It is probably because I have been spoiled by Vercel, Netlify and the like. Writing a Dockerfile has never been a thing I enjoyed, though LLMs have made it easier. But Fly.io forcing me to do it has been a great way to level up my skills.

Realising I have a full VM to work within on Fly.io is the eureka moment. In my Dockerfile, I can configure any Linux dependency. I can even set up SQLite next to my application and have it all run on the same instance! Not sure if it’s recommended, but it works, and that’s cool.

2025・02・21

Articbase paired with Citra is rather awesome. It sort of turns a 3DS into a Switch-like system. My understanding is that it forwards the signals of the 3DS process to a remote machine to actually do the processing! The remote machine is doing the heavy lifting in terms of rendering the game, so if the machine is powerful it can upscale or do all sorts of resource-intensive stuff. Really clever!

2025・02・11

Native support for web sockets looks interesting… I could rebuild big-two with this?

I also would like to try a durable object rewrite.

In the meantime. I should look into using useSyncExternalStore for my real-time frontend syncing.

2025・02・10

Got notifications implemented for big two, which was rather annoying. Turns out there are two web notification APIs. There is the Notification API, which you need to use to check if a user has allowed permissions for notifications on a website. However, on Android (possibly iOS too?!) you don’t invoke local notifications with this API (even though you can!). You have to ensure you have a service worker registered to your website, and you have to invoke a notification using said service worker’s notification method 🤦. It’s horrible ergonomics, but it seems to be working on Android devices… I only realised after reading this PSA that was buried away.

The main limitation of my current local notifications system is that when I’m in a different game, notifications from another game won’t be triggered. I think the more robust method will be to implement push notifications, which looks way more involved.

2025・01・30

Great deep dive into React server components https://tigerabrodi.blog/why-is-react-server-components-actually-beneficial-full-history

- SSR is actually terrible, hydration is pure overhead

- RSC solve the problem of keeping static things pure HTML so you can render

interesting set of libs to make cool interactions and animations

2025・01・26

publishing my big-two game to the android play store has been a good learning experience.

- include a manifest file to make it a barebones PWA

- convert it to a TWA with bubblewrap https://github.com/GoogleChromeLabs/bubblewrap/tree/main/packages/cli

  - also follow this guide https://developers.google.com/codelabs

- sign up, verify and pay $25 on the play console https://play.google.com/console/

- remember to create a Digital Asset Links file, so the app can run in full screen

And I am now just discovering this tool https://www.pwabuilder.com/reportcard?site=https://big-two.fly.dev/

2025・01・23

Starting to get interested in self-hosting either with Coolify or maybe even with https://www.smallweb.run/docs/getting-started

2025・01・20

day 3 learning neovim. Made a lot of progress yesterday: cemented some useful vim motions for selecting blocks of text and navigating around, code actions, TS LSP configured, lazygit integration. Still lots that I want to get configured though.

- vim motions for changing quotes and adding surrounding brackets

- npm management

- biome lsp working correctly

- astro lsp

- better workflow for splits

- configure telescope for searching across file. neo-tree is slow?

- tmux

ok figured out how mini.surround works now…

it’s important to type sr / sa fast to activate surround mode

a common workflow is to change className to string literals in React

<div className="m-10"/>

first run

sr"`

which [s]urrounds and [r]eplaces " with a backtick.

<div className=`m-10`/>

we now need to surround the backticks with braces to make it valid JSX with

saa`{

which [s]urrounds and [a]dds curly braces

note: shift + k provide lsp hover effect, awesome for getting typescript details on a symbol!

so I’ve spent all day configuring my neovim setup today and I’m feeling a bit burnt out from it. It feels like messing around with a linux distro and hitting issue after issue. When I open vscode it just feels comfortable and things just work.

2025・01・19

day 2 learning neovim.

- Continuing with https://www.youtube.com/watch?v=KYDG3AHgYEs. Customising keybinds is starting to make more sense.

- wasted a bunch of time configuring smooth scrolling. Trackpad scroll gets janky when you enable something like mini.animate. Will need to get used to not using the trackpad to scroll so much, and try to get used to ctrl+u and ctrl+d.

- throwing my init.lua into Claude is surprisingly handy!

- Also ChatGPT helped me get moving lines up and down working, vscode style:

vim.keymap.set('n', '<C-j>', ':m .+1<CR>==', { noremap = true, silent = true }) -- Move line down

vim.keymap.set('n', '<C-k>', ':m .-2<CR>==', { noremap = true, silent = true }) -- Move line up

-- Move selected lines up and down in visual mode

vim.keymap.set('v', '<C-j>', ":m '>+1<CR>gv=gv", { noremap = true, silent = true }) -- Move selection down

vim.keymap.set('v', '<C-k>', ":m '<-2<CR>gv=gv", { noremap = true, silent = true }) -- Move selection up

- my window split management is pretty bad right now. something to work on later…

2025・01・18

Taking a second pass to learn neovim again.

- Currently working through this https://www.youtube.com/watch?v=KYDG3AHgYEs. It’s starting from a much more grassroots level and configuring a few custom commands, e.g. stop copying stuff I’m deleting, vim!!

- Have worked through Vim :Tutor (again?)

2025・01・05

Nerd sniped myself trying to drop pocketbase as my realtime db. A sort of simpler, hacky alternative has been to use server-sent events. I’ve gotten something working by combining hono.js, drizzle orm and sqlite. hono.js makes it really easy to create an sse endpoint, drizzle gives me TS QOL and sqlite will just make deploying things easier.

- within my insertion POST hono endpoint i emit an insert event along with the data payload

- my sse endpoint listens for the events emitted in step one

- when an event is received, the sse endpoint will write to the SSE stream.
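The three steps above, sketched without hono or drizzle so it runs on its own. It uses Node’s built-in EventEmitter as the in-process bus. InsertPayload, handleInsert, subscribe and toSseFrame are names I’ve made up, and the write callback stands in for whatever writes to the real SSE stream.

```typescript
import { EventEmitter } from "node:events";

// Hypothetical row shape; the real one would come from the drizzle schema.
type InsertPayload = { id: number; text: string };

// One in-process bus shared by the POST handler and the SSE endpoint.
const bus = new EventEmitter();

// Step 1: the insert handler emits an event along with the data payload.
function handleInsert(row: InsertPayload): void {
  // (the real handler would persist the row to sqlite before emitting)
  bus.emit("insert", row);
}

// Formats one message in the text/event-stream wire format:
// an "event:" line, a "data:" line, then a blank line to end the message.
function toSseFrame(event: string, data: unknown): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}

// Steps 2 and 3: the SSE endpoint listens for inserts and writes each one
// to its stream. Returns a cleanup function to call when the client disconnects.
function subscribe(write: (chunk: string) => void): () => void {
  const listener = (row: InsertPayload) => write(toSseFrame("insert", row));
  bus.on("insert", listener);
  return () => bus.off("insert", listener);
}

// Simulate one connected client by collecting what would be streamed.
const written: string[] = [];
const unsubscribe = subscribe((chunk) => written.push(chunk));
handleInsert({ id: 1, text: "hello" });
unsubscribe();
handleInsert({ id: 2, text: "sent after the client disconnected" });
```

One caveat with this approach: the bus lives in memory, so it only works while everything runs in a single process.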

2025・01・01

Happy new year!

Already thinking about all the new things I want to learn and do for this year whilst juggling my new life as a dad…

Some things on my mind:

- Simplify my notes setup to make it even easier to jot notes and write more essays. I like this guide https://stephango.com/vault

- Finish off my big two game. building it has gotten me wanting to learn more about real time systems. I wanna go deeper into websockets and server sent events.

- building big two has made me want to explore building my own version of pocketbase with better types like convex. Hono.js looks like it could be core pillar to build this.

- I keep wanting to get better at vim and neovim. I want to build up my dev workflow with this.

2024・12・23

https://big-two-app.fly.dev/ all working on fly.io now. Thinking about whether I can drop pocketbase as a realtime backend entirely

Server-sent event seems like a perfect fit for this game as I’m only ever reading state. mutations happen via post requests, so I dont need websockets for bidirectional communication.

time for a re-build!!

2024・12・20

so much ai stuff from cloudflare

2024・12・07

2024・11・23

Cursor is teaching me how to re-write my big-two game with xstate, which is awesome. It’s not so scary when applied to a real-life context. The API sort of reminds me of the vue.js options API

export const createBigTwoGameMachine = createMachine(

{

id: "bigTwoGame",

initial: "WAITING_FOR_PLAYERS",

types: {

// Types for context, states, actions etc all go here

},

context: {

players: [],

currentPlayerIndex: 0,

roundMode: null,

cardPile: [],

consecutivePasses: 0,

winner: undefined,

},

states: {

// Define all possible states here and also what those states can transition to. We can also place guards and other things in these states too. e.g

WAITING_FOR_PLAYERS: {

on: {

JOIN_GAME: {

actions: ["addPlayer"],

},

START_GAME: {

target: "GAME_STARTING",

guard: "hasEnoughPlayers",

},

},

}

},

},

{

guards: {

// small functions to guard against impossible states

hasEnoughPlayers: ({ context }) => context.players.length >= 2,

},

actions: {

// actions are like dispatchers: the actual business logic to update the state context. This is where mutations live.

addPlayer: ({

context,

event,

}: {

context: GameContext;

event: GameEvent;

}) => {

context.players.push({

id: event.playerId,

name: event.playerName,

hand: [],

});

},

},

}

);

…and it has also taught me the slightly newer setup version of this API too.

export const createBigTwoGameMachine = setup({

types: {

context: {} as GameContext,

events: {} as GameEvent,

},

actions: {

dealCards: ({ context }) => {

// Implementation here

},

playCards: ({ context, event }) => {

// Implementation here

},

setRoundMode: ({ context, event }) => {

// Implementation here

},

},

guards: {

hasMaxPlayers: ({ context }) => context.players.length !== 4,

},

}).createMachine({

id: "bigTwoGame",

initial: "WAITING_FOR_PLAYERS",

context: {

players: [],

currentPlayerIndex: 0,

roundMode: null,

cardPile: [],

consecutivePasses: 0,

winner: undefined,

},

states: {

WAITING_FOR_PLAYERS: {

on: {

JOIN_GAME: {

actions: ["addPlayer"],

guard: "hasMaxPlayers",

},

START_GAME: {

target: "GAME_STARTING",

},

},

},

},

});

2024・11・15

- immer for updating deeply nested objects in an immutable way is a game-changer when paired with React state-setting.

2024・11・04

- Good guide to self-host pocketbase. Was almost too easy… What’s the catch?

2024・11・03

- Did you hear? tech twitter is on bluesky now https://bsky.app/profile/patak.dev/post/3l7pyknij2z2y

2024・11・02

2024・10・31

importing types into a definition file is not intuitive! https://stackoverflow.com/a/51114250

2024・10・27

Exploring realtime options to build big-two

- https://developers.cloudflare.com/durable-objects/

- https://supabase.com/docs/guides/realtime

- https://docs.convex.dev/realtime

- https://www.mongodb.com/developer/products/mongodb/real-time-data-javascript/

- https://pocketbase.io/docs/api-realtime/

Leaning towards convex due to familiarity, but pocketbase looks very compelling for simplicity and portability.

2024・10・26

JS clis with clack

2024・10・19

- encrypt data with base 64 encoded urls https://64.surf/

2024・10・07

- React one is a new framework to keep an eye on. Tight integration with https://onestack.dev/docs/data , replicache could make this very interesting for local-first and real-time applications. Maybe a good contender for my big-two game.

2024・10・06

- 10 days of trying to code with nvim and still finding it a bit clunky. Some commands feel good, like gd and ci{ (and other ci variants). Just not fully used to navigating a file with just hjkl; mouse scroll still feels superior and smoother? Will keep at it for the rest of this month.

2024・09・25

started learning neovim

- https://vimforreactdevs.com/ , good starter, uses kickstart

- https://www.youtube.com/watch?v=V070Zmvx9AM - specific to web dev, not just React; recommends LazyVim, which overall feels better to me

2024・09・18

- Free alternative to https://crocotile3d.com/ , https://jeiel.itch.io/sprytile

- https://www.youtube.com/watch?v=-GWvYamwKRI

2024・09・11

- make 3d models but using 2D tile maps for that retro look! https://crocotile3d.com/

2024・09・07

free game assets:

2024・09・06

- sprite sheets with ChatGPT https://x.com/i/bookmarks?post_id=1712674661706977360 https://www.youtube.com/watch?v=dR8JMsYtq9w&t=81s

2024・08・21

Learnt about the Event Bus pattern a.k.a global signals.

Instead of letting child nodes handle their own signals, we centralise them at the highest point in a Godot game: an autoloaded singleton. This creates a singleton (class) available to all nodes at runtime. If we set up signals in this class, we can emit a signal from any node simply by referring to it, e.g. GlobalSignal.hit.emit(). This also means that any other node can subscribe to the events of this global signal, as if it’s broadcasting from the highest level. This pattern has been useful when constructing a HUD for a game. When my enemies die I need to emit how many points they were worth, to show it on the UI, the HUD.
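The same shape can be sketched outside Godot. This is TypeScript rather than GDScript, with a hand-rolled Signal class standing in for Godot’s built-in signals; Hud, Enemy and the point values are made up for illustration.

```typescript
// Tiny stand-in for a Godot signal: listeners connect, emitters broadcast.
class Signal<T> {
  private listeners: Array<(payload: T) => void> = [];

  connect(listener: (payload: T) => void): void {
    this.listeners.push(listener);
  }

  emit(payload: T): void {
    for (const listener of this.listeners) listener(payload);
  }
}

// The "autoload": a single object every other part of the game can reach.
const GlobalSignal = {
  points: new Signal<number>(),
};

// The HUD subscribes once and never needs a reference to any enemy.
class Hud {
  score = 0;

  constructor() {
    GlobalSignal.points.connect((points) => this.updateScore(points));
  }

  private updateScore(points: number): void {
    this.score += points;
  }
}

// Enemies emit on the global bus and never need a reference to the HUD.
class Enemy {
  private pointsWorth: number;

  constructor(pointsWorth: number) {
    this.pointsWorth = pointsWorth;
  }

  die(): void {
    GlobalSignal.points.emit(this.pointsWorth);
  }
}

const hud = new Hud();
new Enemy(10).die();
new Enemy(25).die();
```

The trade-off is the usual one for global buses: decoupling is great, but it gets harder to trace who is emitting what.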

        GlobalSignal
        signal: points
         /        \
        /          HUD
       /           - GlobalSignal.points.connect(update_score)
    Enemy
    - GlobalSignal.points.emit()

2024・08・11

2024・08・10

rough notes from playing around with Godot so far:

- It is a half low-code, full-code game SDK that supports both 2D and 3D

- The game logic is mostly written with GDScript, a Python-like language with support for types

- It feels intentional which part of the software is meant to be used with a GUI e.g creating enemy paths and setting up 3D cameras.

- Everything is a Node

- Nodes can be organised into Scenes

- Scenes can also be composed into other Scenes

- Nodes communicate with other nodes with signals, which can send messages and/or arguments too.

2024・08・08

Quickly make a clone of your repo to a separate directory with git worktree

git worktree add ../copied-repo

2024・07・22

2024・07・19

Astro.js just keeps getting better. Server islands is out performing next.js partial pre-rendering https://x.com/FredKSchott/status/1813995537089409268

2024・07・15

handling streams from OpenAI is tricky, a few resources which look useful

2024・06・30

Making a custom agent with OpenAI is fun. You can create a bot with a particular purpose quite quickly with just a pre-prompt. It’s a multi-part process though:

- create an assistant - this can be reused

- create a thread that uses an assistant

- continue the thread by referencing the thread id that was generated

OpenAI keeps threads for a little while, but it’s probably not a good persistent storage solution… So what’s the solution to storing long threads? Chuck it all in a DB?

https://community.openai.com/t/questions-about-assistant-threads/485239/3

2024・06・15

node js has a lot of out-the-box functionality these days.

- parseArgs for CLI args parsing

- --env-file= cli flag to import any env files in the INI format

- fetch is built in

https://evertpot.com/node-changelog-cli-tool/ https://nodejs.org/api/util.html#parseargs-tokens https://nodejs.org/dist/latest-v20.x/docs/api/cli.html#--env-fileconfig https://nodejs.org/docs/v20.14.0/api/globals.html#fetch
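A quick sketch of parseArgs from node:util. The flags (--name, --verbose) and the argument list are made up for a pretend CLI; in a real script args would usually be process.argv.slice(2).

```typescript
import { parseArgs } from "node:util";

// Hypothetical flags for a made-up CLI. The args array is hard-coded here
// so the example is reproducible without a real command line.
const { values, positionals } = parseArgs({
  args: ["--name", "shiba", "--verbose", "photo.png"],
  options: {
    // String options consume the next token as their value.
    name: { type: "string", short: "n" },
    // Boolean options take no value, so "photo.png" is left as a positional.
    verbose: { type: "boolean", short: "v", default: false },
  },
  // Without this, any positional argument makes parseArgs throw.
  allowPositionals: true,
});
```

Paired with something like node --env-file=.env script.js and the built-in fetch, a small CLI no longer needs any dependencies for the basics.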

2024・06・08

I launched shibes.lol and it’s been a success. Amazing to see my ShibaEveryHour audience participate and add their shiba photo submissions. It’s been so much fun.

This project validates my fullstack cloudflare astro starter:

- Astro.js - fullstack web framework

- Cloudflare d1 - storage

- Cloudflare r2 - images / objects

- Lucia - authentication

2024・06・02

turbo development on shibes.lol . The focus, build a tool to submit shiba photos as quickly as possible. I still keep getting distracted by building non-important things, like basic auth and silly css 🤦.

2024・05・31

- VITAMINS & MINERALS

  a. Zinc, daily 10-15mg (zinc to be balanced with Copper in a ratio of 10:1, because more Zinc will reduce Copper in your blood)

  b. Daily Sunlight

  c. Vit D/C

  d. Biotin 30 mcg (only if deficient)

- Male Pattern Baldness PREVENTION

  a. Saw Palmetto 320 mg daily on a full stomach

  b. Finasteride 0.25mg, 4 times a week min (can have erectile dysf so take Rosemary Oil or Castor Oil). Use the spray version of Fin.

- REGROW

  a. Scalp Massage, 5 mins min

  b. Minoxidil, apply daily min 1-2 times

  c. Microneedling / Derma Pen 1mm (press in, don’t roll), 1-2 times a week

  d. Gelatine supplementation (make hair thicker). Gelatin is made from animal collagen such as bones, skin, tendons and ligaments.

- AVOID

  a. Processed foods, smoking & alcohol

  b. Dehydration, stress, poor sleep, showering with Fluoride

Note;

- Take Biotin if deficient. Minoxidil doesn’t prevent hair loss. Finasteride is a must but has side effects. They come in sprays and oils, so use those topical ones.

- Try natural ways, avoid all oral medication and try topical ways as much as possible.

- There are two DHT pathways in our body. Saw Palmetto can only block one pathway and apparently Finasteride blocks the other. But try only Saw Palmetto first and see, before you try the spray version of Finasteride, because it seems unavoidable.

https://youtu.be/i7MLUy0Yac4?si=J0gck9vmXVhtheI4

- Lysine (via grass fed beef, eggs; make sure you’re getting enough protein)

- Iron

- Probiotics - having a healthy gut

- DHT inhibitors like zinc, pumpkin seed oil, green tea extract, nettle root

- Lower stress

- B vitamins https://youtu.be/9yr7A1fOuKM?si=KtNQO8Ohx-wRg_ya

2024・05・30

- Can’t use Docker Desktop for work, but Rancher Desktop is just as good, maybe better!?

2024・05・29

Pairing Cloudflare transform zones with r2 image and unpic means i can transform images for free and have them optimised on the client with almost zero effort!

React 19 notes:

2024・05・27

Thinking of more full-stack Cloudflare teething issues. Turns out its really hard to connect to d1 remotely? but how does drizzle do this with their d1 client I wonder? .

https://zzai.tech/blog/connect-d1-database-remotely-from-local-svelte-development

displaying r2 image objects as urls the hard way:

const APP_BUCKET = Astro.locals.runtime.env.APP_BUCKET;

const image = await APP_BUCKET.get("local-image", {});

const imageBuffer = await image?.arrayBuffer();

const imageBase64 = btoa(String.fromCharCode(...new Uint8Array(imageBuffer)));

const imageUrl = `data:image/png;base64,${imageBase64}`;

Display images from r2 the easy way:

- Make your r2 bucket public and get an r2.dev domain - https://developers.cloudflare.com/r2/buckets/public-buckets/#managed-public-buckets-through-r2dev

- get images by supplying the key in the subpath of your r2.dev URL, e.g.

https://pub-example-bucket.r2.dev/${r2-image-key}

note: linking your custom domain is the preferred method when going to prod, as you can use existing Cloudflare caching - https://developers.cloudflare.com/r2/buckets/public-buckets/#custom-domains

transform images with Cloudflare Images and their URL API: https://developers.cloudflare.com/images/transform-images/transform-via-url/

note: you must have a domain to be able to do this. Once enabled, it exposes a sub path cdn-cgi on your domain, a.k.a. zone, e.g.

https://chiubaca.com/cdn-cgi/image/width=80,quality=75/https://assets.chiubaca.com/PxcxZE6aGI_kLOEM6gq_400x400.jpg
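A small helper for building URLs like the one above. This is just a sketch: buildTransformUrl and TransformOptions are my own names, and only the two options used in the example (width, quality) are covered.

```typescript
// A small subset of transform options; names mirror the ones in the URL above.
interface TransformOptions {
  width?: number;
  quality?: number;
}

// Builds https://<zone>/cdn-cgi/image/<options>/<source image URL>.
function buildTransformUrl(
  zone: string,
  options: TransformOptions,
  sourceUrl: string,
): string {
  const parts: string[] = [];
  if (options.width !== undefined) parts.push(`width=${options.width}`);
  if (options.quality !== undefined) parts.push(`quality=${options.quality}`);
  // Options are comma-separated, then the source image follows as the rest of the path.
  return `https://${zone}/cdn-cgi/image/${parts.join(",")}/${sourceUrl}`;
}

const avatarUrl = buildTransformUrl(
  "chiubaca.com",
  { width: 80, quality: 75 },
  "https://assets.chiubaca.com/PxcxZE6aGI_kLOEM6gq_400x400.jpg",
);
```

Handy for generating a few sizes of the same r2 image without hand-writing each URL.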

2024・05・26

- more full stack Cloudflare with Astro. Now moving into R2, which I originally wanted to test out for image assets. Uploading images is straightforward enough, but reading the object again as an image is proving to be tricky, as we’re working with R2 objects which might need parsing, converting, etc.

- is https://www.cloudflare.com/en-gb/developer-platform/cloudflare-images/ a better fit for this?

- seems using the aws s3 client to interface with R2 is the way forward? https://docs.aws.amazon.com/AWSJavaScriptSDK/v3/latest/client/s3/

2024・05・19

Working on fullstack Astro Cloudflare starter. Trying to integrate Lucia auth with Drizzle and Cloudflare d1. Lucia docs are barebones and imprecise. Luckily this video helped a lot! https://www.youtube.com/watch?v=urAHMyBXM6k

Discovering lots of weird quirks with Cloudflare Pages. The hardest thing to reason about is discrepancies between local dev and production

The main thing to remember is local dev is not a Cloudflare runtime so we need to be conscious of library choices. This is not a bad thing really…

Environment variables are weird:

- Pushing a local codebase to Cloudflare Pages, it absorbs the envs you have specified in your .env.

- Using a GitHub branch deployment, environment variables specified can’t be accessed via Astro’s import.meta.env. Instead they appear to be injected into the Cloudflare runtime and can be accessed via Astro.locals.runtime. This object is available in astro files and middleware. https://docs.astro.build/en/guides/integrations-guide/cloudflare/#cloudflare-runtime

- to use development secrets, use a .dev.vars file.

2024・05・14

2024・05・13

tsx lets you run any typescript file as easily as

npx tsx ./script.ts

https://tsx.is/getting-started

With the absolute failure of delete-fullstack-cloudflare. I’ve been exploring Astro.js as a full-stack web dev solution. Especially with the announcement of Astro Actions, it feels like Astro can stand its ground against the likes of Next.js.

New goal, get all these features working together in Astro:

- Cloudflare D1 for storage

- Cloudflare R2 for images

- Auth (Lucia/ Auth.js)

2024・05・09

Writing up notes on delete-fullstack-cloudflare . I want to build on Cloudflare pages with next js so much, but there are just too many gotchas and issues i’m running into. logged the following issues on the next-on-pages repo:

Next to explore, Next.js with SST ? or Astro Actions ?? with cloudflare adapter?

2024・05・03

Shiki is the code highlighter built into Astro.

One challenge I’ve had for a long time is code block line highlighting, but with Shiki paired with transformerNotationHighlight (from @shikijs/transformers) it should be possible!

2024・04・21

- A few problems porting an existing Next.js app to Cloudflare Pages. It works locally, but I’m getting a cryptic error along the lines of:

Application error: a server-side exception has occurred (see the server logs for more information).

Digest: 2354650810

Cloudflare logs reveal the following error:

"Error: The 'credentials' field on 'RequestInitializerDict' is not implemented."

lots of people are seeing this error pop up in various forms; one thread that seems related… https://github.com/cloudflare/workers-sdk/issues/2514

2024・04・20

- Big reason to move off Vercel to Cloudflare Pages: the free tier can be used for commercial use: https://community.cloudflare.com/t/cloudflare-pages-for-commercial-use/306890/2

- nice guide to work with nextjs and cloudflare pages https://logsnag.com/blog/deploying-nextjs-13-app-dir-to-cloudflare-pages

2024・04・15

Thank you Netlify. You’ve been amazing over the years. But Cloudflare pricing, reliability and general future outlook can’t be denied. The migration of my projects to Cloudflare has begun. notes.chiubaca.com and chiubaca.com have been ported over seamlessly.

2024・04・08

Thinking about calmness and this blog

I love the idea of a calm company

2024・04・07

So many great announcements from cloudflare https://blog.cloudflare.com/pages-workers-integrations-monorepos-nextjs-wrangler#improved-nextjs-support-for-pages

- Mono repo support, this will make moving chiubaca-mono to cloudflare way more straightforward!

- Next.js support on Pages! need to evaluate this over SST but there are two promising ways to move off Vercel

2024・04・02

- vs code customLabels for tabs coming soon. useful in next.js now every file is called page.tsx https://www.linkedin.com/posts/wesbos_i-am-so-happy-this-is-being-implemented-ugcPost-7176578095288647680-_KWX?utm_source=share&utm_medium=member_desktop

2024・03・23

Keep thinking about this Netlify horror story.

Considering :

2024・03・24

high level steps for getting a mockup via the Printful REST API

Get all products: https://api.printful.com/products e.g we’re interested in stickers:

{

"id": 505,

"main_category_id": 202,

"type": "STICKER",

"description": "These glossy stickers are designed just for you. Give your favorite items, like a notepad, laptop, or phone case an extra glow and personal touch. \n\n• Film type: MPI 3000 Gloss HOP (EU), Promotional Monomeric PVC (US)\n• 0.12″ (0.3 cm) white sticker border \n• Glossy finish\n• Fast and easy application\n• 2–3 year durability\n• Indoor use\n• Blank product sourced from Japan\n\nDon't forget to clean the surface before applying the stickers.",

"type_name": "Kiss Cut Sticker Sheet",

}

Use id 505 to make the next request:

https://api.printful.com/products/505

This returns full Printful stock, variants and other useful details about this product. Most importantly keep notes of the variant IDs, eg "id": 12917.

Use the product id again to get printfiles for this product: https://api.printful.com/mockup-generator/printfiles/505

e.g response:

{

"code": 200,

"result": {

"product_id": 505,

"available_placements": {

"default": "Print file",

"background": "Background"

},

"printfiles": [

{

"printfile_id": 256,

"width": 1750,

"height": 2482,

"dpi": 300,

"fill_mode": "fit",

"can_rotate": false

},

{

"printfile_id": 259,

"width": 1822,

"height": 2554,

"dpi": 300,

"fill_mode": "cover",

"can_rotate": false

}

],

"variant_printfiles": [

{

"variant_id": 12917,

"placements": {

"default": 256,

"background": 259

}

}

],

"option_groups": [

"Flat",

"Holiday season",

"Lifestyle",

"Lifestyle 2",

"Lifestyle 3",

"Lifestyle 4",

"Spring/summer vibes",

"Valentine's Day",

"template"

],

"options": [

"Background",

"Christmas",

"Christmas 2",

"Front",

"Spring/Summer",

"Spring/Summer 2",

"Valentine's Day",

"Valentine's Day 2"

]

},

"extra": []

}

We’re now ready to form a mockup generator request: https://api.printful.com/mockup-generator/create-task/505

This is a POST request which needs a body with a payload like:

{

"variant_ids": [

12917

],

"format": "jpg",

"files": [

{

"placement": "default",

"image_url": "https://clever-stork-292.convex.cloud/api/storage/9fdcfdeb-ee06-47a2-83ff-01184c939d0d",

"position": {

"area_width": 1800,

"area_height": 1800,

"width": 1800,

"height": 1800,

"top": 0,

"left": 0

}

}

]

}

This will return a task that can be polled at an interval:

{

"code": 200,

"result": {

"task_key": "gt-645099290",

"status": "pending"

},

"extra": []

}

Poll the task at: https://api.printful.com/mockup-generator/task?task_key={task_key}
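The create-then-poll flow above can be sketched roughly like this. It only uses the two endpoints and the `task_key` / `status` fields shown in the sample responses. The `HttpClient` type, the helper names, the bearer-token header, and the interval/attempt defaults are assumptions for illustration, not something taken from the Printful docs.

```typescript
// Hypothetical shapes: only the fields that appear in the sample responses above.
interface MockupTask {
  task_key: string;
  status: string;
}

interface HttpResponse {
  json(): Promise<any>;
}

// Assumption: a fetch-like function is injected so the flow is easy to test.
type HttpClient = (
  url: string,
  init?: { method?: string; headers?: Record<string, string>; body?: string }
) => Promise<HttpResponse>;

const PRINTFUL_API = "https://api.printful.com";

// POST the mockup payload for a product and return the created task.
async function createMockupTask(
  http: HttpClient,
  productId: number,
  payload: unknown,
  token: string // assumption: bearer-token auth
): Promise<MockupTask> {
  const res = await http(`${PRINTFUL_API}/mockup-generator/create-task/${productId}`, {
    method: "POST",
    headers: { Authorization: `Bearer ${token}`, "Content-Type": "application/json" },
    body: JSON.stringify(payload),
  });
  const json = await res.json();
  return json.result as MockupTask;
}

// Poll the task endpoint until the status is no longer "pending".
async function pollMockupTask(
  http: HttpClient,
  taskKey: string,
  token: string,
  intervalMs = 2000,
  maxAttempts = 30
): Promise<MockupTask> {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const res = await http(
      `${PRINTFUL_API}/mockup-generator/task?task_key=${encodeURIComponent(taskKey)}`,
      { headers: { Authorization: `Bearer ${token}` } }
    );
    const task = (await res.json()).result as MockupTask;
    if (task.status !== "pending") return task;
    // Wait before asking again.
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
  throw new Error(`Mockup task ${taskKey} still pending after ${maxAttempts} attempts`);
}
```

In a real script the global `fetch` could be passed in as the `http` argument; capping the attempts keeps a stuck task from polling forever.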

TODO:

Figure out how to automate placing an order:

A POST to https://api.printful.com/orders with the following body works:

{

"external_id": "sticker-2-4235234213",

"shipping": "STANDARD",

"recipient": {

"name": "John Smith",

"company": "John Smith Inc",

"address1": "19749 Dearborn St",

"address2": "string",

"city": "Chatsworth",

"state_code": "CA",

"state_name": "California",

"country_code": "US",

"country_name": "United States",

"zip": "91311",

"phone": "string",

"email": "alexchiu11@gmail.com",

"tax_number": "123.456.789-10"

},

"items": [

{

"id": 1,

"external_id": "sticker-item-2",

"variant_id": 1,

"quantity": 1,

"price": "13.00",

"retail_price": "13.00",

"name": "Kiss Cut Sticker Sheet",

"product": {

"variant_id": 12917,

"product_id": 505,

"image": "https://printful-upload.s3-accelerate.amazonaws.com/tmp/71dbbab32afaf7a761f7a28b91ac9268/kiss-cut-sticker-sheet-white-front-6600501f5d2c0.png",

"name": "Kiss Cut Sticker Sheet"

},

"files": [

{

"type": "default",

"url": "https://printful-upload.s3-accelerate.amazonaws.com/tmp/71dbbab32afaf7a761f7a28b91ac9268/kiss-cut-sticker-sheet-white-front-6600501f5d2c0.png",

"options": [

{

"id": "template_type",

"value": "native"

}

],

"filename": "shirt1.png",

"visible": true,

"position": {

"area_width": 18,

"area_height": 2400,

"width": 1800,

"height": 1800,

"top": 300,

"left": 0,

"limit_to_print_area": true

}

}

],

"options": [

{

"id": "OptionKey",

"value": "OptionValue"

}

],

"sku": null,

"discontinued": true,

"out_of_stock": true

}

],

"retail_costs": {

"currency": "USD",

"subtotal": "10.00",

"discount": "0.00",

"shipping": "5.00",

"tax": "0.00"

},

"gift": {

"subject": "To John",

"message": "Have a nice day"

},

"packing_slip": {

"email": "alexchiu11@gmail.com",

"phone": "+371 28888888",

"message": "Message on packing slip",

"logo_url": "http://www.your-domain.com/packing-logo.png",

"store_name": "Your store name",

"custom_order_id": "kkk2344lm"

}

}

2024・03・21

Been going deep into Stripe, Stripe.js and Stripe Elements. The killer feature of Stripe appears to be Stripe Elements, which lets you embed a checkout element anywhere on your website to build custom checkout flows. LemonSqueezy on the other hand only seems to have the Hosted and Overlay flows. Once they have a similar product to Elements it could be a real Stripe killer.

2024・03・17

Stripe docs are great, especially how parts of the code get highlighted as you scroll. Stripe uses https://markdoc.dev/ behind the scenes. Something similar could be created with https://expressive-code.com .

2024・03・15

AbortController is a web standard for aborting requests via a signal.

This is quite important in React 18 due to the double firing of useEffects in dev mode. We probably want to cancel redundant requests when components mount and re-mount.

useEffect(() => {

// Setup our abortController

const abortController = new AbortController();

const getAsyncData = async () => {

try {

const res = await fetch(`/api/some-data?query=${queryParam}`, {

method: 'POST',

signal: abortController.signal,

});

const json = await res.json();

const clientSecret = json.clientSecret;

setStripeState({ state: 'success', clientSecret });

} catch (e) {

if (!abortController.signal.aborted) {

setStripeState({

state: 'error',

message: 'Unknown error',

});

}

}

};

// Kick off the request (without this call the fetch never runs)

getAsyncData();

// If the component unmounts or the queryParam changes we can cancel the fetch request like this:

return () => {

abortController.abort();

};

}, [queryParam]);

2024・03・11

- Third week into the new job. Some new tech to get my head around.

2024・02・27

https://volta.sh/ is a better nvm alternative. it detects the node version in your project if engines has been configured in your package.json .

"engines": {

"node": "18.x"

},

2024・02・25

excited about ElectricSQL - Sync for modern apps (electric-sql.com)

2024・02・24

grep.app | code search: search for coding examples in GitHub repos.

Integrated the same demo from SDXL Lightning - by fal.ai (fastsdxl.ai) into sticker-ai project and it’s amazing?! real time image generation is a game changer in terms of UX for projects that are generating images from text.

2024・02・23

- real time image generation by FalAI incredible SDXL Lightning - by fal.ai (fastsdxl.ai) . Possibly need to pivot to using this over open AI because it is just SOO much faster!

- Docs on how to integrate with Vercel/Next.js Nextjs - fal docs

- Example next app router demo -fal-js/apps/demo-nextjs-app-router at main · fal-ai/fal-js (github.com)

- Looks like the live demo site is built using next js too and all open source : fal-ai/sdxl-lightning-demo-app: A demo application using fal.realtime and the lightning fast SDXL API provided by fal (github.com)

excellent directory of tailwind animations animation.ibelick - Tailwind CSS animation gallery

2024・02・22

- progress with sticker-ai: payments linked to LemonSqueezy, convex linked to OpenAI, UI all linked up to convex, auth linked up to Clerk. This is the fastest I’ve ever built!

2024・02・20

Using convex to build sticker-ai and it’s a lot of fun! Getting into the weeds of how convex works is interesting. Its patterns and principles make it a delight to work with. Things I like about it so far:

- all convex logic lives in /convex

- logic is clearly separated into queries, mutations, actions

- everything is strongly typed

- the auto generated api is awesome

things that are confusing:

- convex actions sort of make React Server Actions redundant

- Are you even supposed to run convex actions in React actions? context object seems to get lost.

- i guess working offline is out the question?

2024・02・17

Reading resources on openai.com, so much good stuff:

- Prompt engineering - OpenAI API

- OpenAI Cookbook

- What’s new with DALL·E-3? | OpenAI Cookbook

( shit lol, this was my whole idea)

convert bitmap to svg -Potrace (sourceforge.net)

2024・02・16

- Turn your Postgres into MongoDB FerretDB

-

Replicate.com is such an amazing platform for using and training AI models. So exciting exploring around and tinkering with fine tunings and existing models. Questions I have though..

- When to fine tune?

- When to use the model as is?

- How to adapt an existing model to produce consistent outputs

-

Open AI also has the ability to fine tune…

2024・02・14

GPT4All from nomic ai lets you run models locally hassle free

got access to Fly GPUs · Fly , this makes serverless AI possible.

2024・02・12

2024・02・09

Researching more into contracting life and what it entails.

Points from Guide to software engineering contracting in UK (codedeepdives.com)

- can easily demand anywhere between 400 - 500 pounds a day

- work can range between 6 weeks to 6 months

- the feeling of job security is subjective

- set up a ltd company (and read up on IR35)!

- get an accountant!

- getting a mortgage becomes more complex but there are specialist brokers out there for this

- get a business bank account! e.g. Tide (www.tide.co) or Starling. Monzo also looks good.

Other things i dont understand yet.

- best way to define work with an employer

- handling timelines

Notes from GPT-4

Certainly! As a software engineer contractor, organizing your work effectively with clients is crucial for successful project delivery. Here are some essential tips to help you stay on track and maintain a positive client relationship:

-

Clear Communication:

- Establish open and transparent communication channels with your client. Regularly update them on progress, challenges, and any changes.

- Use tools like project management software to track tasks, deadlines, and milestones. This ensures everyone is on the same page.

-

Detailed Requirements Gathering:

- Invest time in understanding the client’s requirements thoroughly. Ask questions, clarify doubts, and document everything.

- Create a detailed scope document that outlines project goals, features, and deliverables.

-

Agree on Milestones and Deadlines:

- Break down the project into smaller milestones. Define clear deadlines for each milestone.

- Regularly review progress and adjust timelines if needed.

-

Contract and Legal Aspects:

- Have a well-defined contract that covers terms, payment schedules, intellectual property rights, and confidentiality.

- Include provisions for change requests and scope adjustments.

-

Regular Work Reports:

- Provide work reports at agreed intervals (e.g., weekly or biweekly). These reports should detail completed tasks, upcoming work, and any issues encountered.

- Allow the client time to review and approve or provide feedback on the work [1].

-

Risk Management:

- Identify potential risks early on. Discuss them with the client and propose mitigation strategies.

- Be prepared for unexpected challenges and have contingency plans in place.

-

Quality Assurance and Testing:

- Ensure thorough testing of your code. Deliver high-quality work that meets the client’s expectations.

- Involve the client in user acceptance testing (UAT) to validate functionality.

-

Documentation:

- Maintain clear and concise documentation. This includes code comments, user manuals, and technical guides.

- Document any design decisions, assumptions, and rationale.

-

Feedback and Iteration:

- Encourage client feedback throughout the process. Be open to making necessary adjustments.

- Iterate based on feedback to improve the final product.

-

Professionalism and Boundaries:

- Set professional boundaries. Clearly define working hours, response times, and availability.

- Maintain a positive and respectful attitude even during challenging situations.

Remember, every client is unique, so adapt these principles to suit the specific project and client dynamics. Building strong client relationships will lead to repeat business and referrals in the long run! 🌟

Learn more:

1. arc.dev

2. teamwork.com

3. bamboogroup.eu

4. geeksforgeeks.org

5. careerkarma.com

6. computer.org

7. en.wikipedia.org

8. ryrob.com

9. fsb.org.uk

10. thedevbazaar.com

11. zeroin.me

12. indeed.com

Paperwork templates: Free Contract Templates and Agreements (Word or PDF) | Signaturely

2024・02・05

- Some time to work on some OSS contributions for counterscale.

2024・02・02

2024・02・01

Mahonia aquifolium - can suppress inflammation and slow down growth of skin cells

Indigo naturalis - also known as qing dai, is a traditional Chinese herbal medicine

Aloe vera - antibacterial and anti-inflammatory properties. They may help soothe the skin and fight bacteria that could cause infections.

2024・01・31

height: 100vh is not that great because content will get chopped off by mobile phone bottom bars. I’ve got around this with:

html,

body {

margin: 0;

padding: 0;

height: 100%;

width: 100%;

}

However, 100svh should also solve this problem

2024・01・30

It’s amazing to see best tooling and libraries transcend into standard web APIs. In musical OSM I have been able to drop Sass for vanilla css with zero code changes

2024・01・29

-

turn this into a JS game Figen on X: “Physics game. https://t.co/HmmvYGolsP” / X (twitter.com)

2024・01・28

- Revived Musical OSM by proxying requests from planet-osm via a Netlify edge function and it works beautifully again. Also took the opportunity to simplify the code massively.

2024・01・22

possibly the best drawer component i’ve seen…

2024・01・19

A GPT trained on geoscience code papers. Could it help write geospatial queries?

2024・01・18

Looking into installing Stable Diffusion locally and the steps seem to have gotten easier. The two main options right now that offer a nice GUI over SD are Comfy UI and AUTOMATIC1111.

Comfy UI looks more powerful but AUTOMATIC1111 seems to be an easier install. Trying the latter for now.

2024・01・15

Not used Netlify functions in a while and it’s improved a lot! Including first class support for TypeScript.

I was able to build a proxy server to append additional headers to an image response very quickly. ArrayBuffers and Blobs still confuse me quite a bit in Node.js land.
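For future reference, a minimal sketch of the header-appending part, assuming a runtime with the standard Fetch API `Response` and `Headers` globals (recent Node, Deno, or edge runtimes). The function name is made up, and this is not the actual Netlify function. Passing the body stream straight through sidesteps the ArrayBuffer/Blob juggling entirely.

```typescript
// Copy an upstream response and add (or overwrite) headers on the copy.
// `withExtraHeaders` is a hypothetical helper name.
function withExtraHeaders(upstream: Response, extra: Record<string, string>): Response {
  // Start from the upstream headers so content-type etc. are preserved.
  const headers = new Headers(upstream.headers);
  for (const name of Object.keys(extra)) {
    headers.set(name, extra[name]);
  }
  // Reuse the body stream as-is instead of buffering it.
  return new Response(upstream.body, {
    status: upstream.status,
    statusText: upstream.statusText,
    headers,
  });
}
```

A proxy handler would then fetch the image and return `withExtraHeaders(upstreamResponse, { ... })` with whatever headers are needed.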

2024・01・14

- Kind of a shame there is no auth-js example for Astro yet, but it looks like it’s planned

- css challenge: would like to try building an icon to scroll to top.

- it should only appear when you’re scrolling up

- it should get bigger but stop getting bigger after it gets to a certain size

- no js

2024・01・10

we fall down

we learn

we stand up

2024・01・08

syntax-tree/unist-util-visit: utility to visit nodes (github.com) is a handy utility to recursively walk a syntax tree.
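To remind myself what such a visitor does, here is a hand-rolled sketch of a recursive walk. It is not the library's implementation or API. `TreeNode` and `visitNodes` are made-up names, loosely modelled on the unist idea of nodes with a `type` and optional `children`.

```typescript
// Hypothetical minimal node shape: a type plus optional value and children.
interface TreeNode {
  type: string;
  value?: string;
  children?: TreeNode[];
}

// Depth-first, pre-order walk that calls `visitor` for every node of the given type.
function visitNodes(
  tree: TreeNode,
  type: string,
  visitor: (node: TreeNode, parent: TreeNode | null) => void,
  parent: TreeNode | null = null
): void {
  if (tree.type === type) visitor(tree, parent);
  for (const child of tree.children ?? []) {
    visitNodes(child, type, visitor, tree);
  }
}

// Usage: collect every text value in a small tree.
const exampleTree: TreeNode = {
  type: "root",
  children: [
    {
      type: "paragraph",
      children: [
        { type: "text", value: "a" },
        { type: "emphasis", children: [{ type: "text", value: "b" }] },
      ],
    },
  ],
};
const collected: string[] = [];
visitNodes(exampleTree, "text", (node) => collected.push(node.value ?? ""));
```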

2024・01・07

Learning more about Git Submodules. They’re meant to be used to link git repos together if they’re dependencies of each other.

Adding a submodule to an existing git repo is as straightforward as this command:

git submodule add https://github.com/chaconinc/DbConnector

This seems perfect for chiubaca-monorepo where I need to link it to my notes repo. The last piece of the puzzle is if I can get a sync working between that repo and my astro codebase. I have not been successful with symbolic links on windows.

Astro.js has a built-in mechanism to manipulate markdown via remark and rehype plugins. These are functions that manipulate the markdown AST (remark) or the resulting HTML AST (rehype), which makes it straightforward to build your own. I’m doing this in chiubaca-mono to convert my obsidian image and markdown references so paths are corrected when published to my Astro websites.
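A simplified sketch of the kind of rewrite involved, done at the string level rather than on the AST, so it is not the actual plugin in chiubaca-mono. It assumes Obsidian-style image embeds like `![[diagram.png]]`; the `/assets` base path and the function name are hypothetical.

```typescript
// Rewrite Obsidian-style embeds such as ![[diagram.png]] into standard
// Markdown image syntax. Anything after a pipe (e.g. a size hint) is dropped.
// `assetBase` is a hypothetical public path for published images.
function rewriteObsidianEmbeds(markdown: string, assetBase = "/assets"): string {
  return markdown.replace(
    /!\[\[([^\]|]+)(?:\|[^\]]*)?\]\]/g,
    (_match: string, file: string) => {
      const name = file.trim();
      // encodeURI keeps slashes but escapes spaces in file names.
      return `![${name}](${assetBase}/${encodeURI(name)})`;
    }
  );
}
```

A real remark plugin would apply the same idea to text nodes while walking the tree, which avoids touching embeds that appear inside code blocks.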

2024・01・03

Building UIs with nested segments may not need to be built using recursion.
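One way this could look, as a sketch: flatten the nested data into a list with depth information using an explicit stack, then render the flat list with indentation. The `Segment` shape and function name are assumptions for illustration.

```typescript
// Hypothetical nested segment shape.
interface Segment {
  label: string;
  children?: Segment[];
}

interface FlatSegment {
  label: string;
  depth: number;
}

// Flatten nested segments in display order without recursion.
function flattenSegments(roots: Segment[]): FlatSegment[] {
  const out: FlatSegment[] = [];
  const stack: Array<{ segment: Segment; depth: number }> = [];
  // Push in reverse so the first root is popped first.
  for (let i = roots.length - 1; i >= 0; i--) {
    stack.push({ segment: roots[i], depth: 0 });
  }
  while (stack.length > 0) {
    const { segment, depth } = stack.pop()!;
    out.push({ label: segment.label, depth });
    const children = segment.children ?? [];
    for (let i = children.length - 1; i >= 0; i--) {
      stack.push({ segment: children[i], depth: depth + 1 });
    }
  }
  return out;
}
```

The UI can then map over the flat array once and use `depth` for padding, instead of rendering a component that renders itself.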

2024・01・02

Reading up on how webmentions work. I didn’t realise it is a w3c spec. Getting started with Webmentions ♂️ [2022 Tutorial] (daily-dev-tips.com)

Reading Perfectionism and Procrastination: How They’re Connected and What to Do About It – Solving Procrastination reminded me of 2023-10-20.

2024・01・01

Happy new year!

Feeling optimistic that this is the year one of side-projects can turn into a real side-hustle.

2023・12・31

v2 of chiubaca.com and notes.chiubaca.com is live all styled up! Still a few minor issues and features i’d like to get in place but its 90% there. I’m ready to write more in 2024!

- some sort of commenting system

- web mentions

- rss feed

- links working in the breadcrumb nav

- improve code reuse

2023・12・30

-

Very happy with the work I’ve done in consolidating a bunch of code for my websites on *.chiubaca.com into a single nx mono-repo at https://github.com/chiubaca/chiubaca-monorepo . My favourite aspect is that both notes.chiubaca.com and chiubaca.com are driven 100% by my notes in this obsidian vault. This is made possible because both obsidian and Astro.js treat yaml frontmatter as first class citizens within their products, so I can do almost anything I want in terms of data manipulation as long as I have consistent frontmatter across all my notes. A big write up of this is needed

-

Configure Cloudflare DNS to Work with Netlify (stevepolito.design)

2023・12・29

GitHub fine-grained personal access tokens play nicely with Tokens(classic) which is nice. So no code refactoring required if you’re using https://github.com/octokit/octokit.js.

Invoke GitHub actions manually with workflow_dispatch

name: Create Empty Daily Note

on:

# run workflow every morning at 6AM

schedule:

- cron: "0 6 * * *"

# run this workflow manually from the github Actions tab

workflow_dispatch:

2023・12・28

- Big shift on unifying *.chiubaca.com sites over to [chiubaca/chiubaca-monorepo (github.com)](https://github.com/chiubaca/chiubaca-monorepo). Solidifying a lot of my knowledge around setting up NX, Astro and general web tooling. Need to do a big write up on how I’ve totally over engineered this whole setup.

2023・12・21

- Loading GLTFs in Mapbox w/ three.js is pretty dang performant! It might not be necessary to use solutions like Cesium and Esri i3s. Need to improve my understanding of GLTFs in the new year.

2023・12・03

Lets get back to journaling! starting a new job has naturally been crazy! Things I want to write about:

- coming up with business ideas

- separating my obsession with tools with solving real life problems

- my experience working at different sized companies

2023・11・17

2023・12・15

Been working with QGIS more and more at work and it continues to delight. The model designer is as powerful as ArcGIS model builder. Managed to automate a raster processing pipeline that converts pixels to GeoJSON points, all through the model designer without needing to read any documentation.

2023・11・16

Control all monitors brightness on windows - emoacht/Monitorian: A Windows desktop tool to adjust the brightness of multiple monitors with ease (github.com)

2023・11・12

-

It’s been fun revisiting A-frame after all these years. Funny how I was able to hack away at a mapping demo so early on in my coding journey and now I’m being paid to explore a real life AR mapping use case.

-

AR.js Location Based has been very fun to work with. It’s now a rabbit hole that will make me want to explore Aframe and three.js deeper…

2023・11・02

-

One major benefit of MapLibre over Mapbox is that we can add custom terrain layer sources: Custom MapLibre Terrain Tiles | bert (wordpress.com)

- Main requirement is that the tiles need to be converted into Mapbox RGB tiles, which live in an x/y/z file structure. mbutil (mapbox/mbutil: Importer and Exporter of MBTiles (github.com)) can help with this.

-

But what if you had to create a raster data set from height points? See: Interpolating Point Data — QGIS Tutorials and Tips
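For the terrain tiles mentioned above, a small sketch of how a height value maps to pixel colours, assuming the tiles use Mapbox's Terrain-RGB encoding (height = -10000 + (R * 256 * 256 + G * 256 + B) * 0.1, in metres). The function names are made up for illustration.

```typescript
// Decode one Terrain-RGB pixel into a height in metres.
function decodeTerrainRgb(r: number, g: number, b: number): number {
  return -10000 + (r * 256 * 256 + g * 256 + b) * 0.1;
}

// Encode a height in metres into an [r, g, b] triple (0.1 m precision).
function encodeTerrainRgb(heightMetres: number): [number, number, number] {
  const raw = Math.round((heightMetres + 10000) / 0.1);
  // Clamp to the 24 bits available across the three channels.
  const value = Math.min(Math.max(raw, 0), 0xffffff);
  return [(value >> 16) & 0xff, (value >> 8) & 0xff, value & 0xff];
}
```

Because the height is packed across all three channels, these tiles should not be resampled with colour interpolation, otherwise the decoded heights get scrambled.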

2023・10・30

-

console logs viewer (markknol/console-log-viewer: Displays logs and Javascript errors in an overlay on top of your site. Useful for mobile webdevelopment. Enabled in 5 seconds (github.com)): perfect for debugging in mobile environments

-

client side background remover that uses wasm - imgly/background-removal-js: Remove backgrounds from images directly in the browser environment with ease and no additional costs or privacy concerns. Explore an interactive demo. (github.com)

2023・10・27

shaders and webgl stuff

2023・10・22

- no entry yet

2023・10・20

The upside of imposter syndrome

“In those moments where you feel like an imposter… you realise ‘I have something to prove’, so you’re not complacent,”

- Imposters have perfectionist tendencies, harbouring a secret need to be the very best at what they do. When they are unable to fulfil their perfectionist goals, “imposters often feel overwhelmed, disappointed, and overgeneralise themselves as failures”.

2023・10・18

2023・10・16

-

How to solve cors issue in geoserver || GeoServer || GeoDev - YouTube

-

Navigate to your GeoServer installation, e.g. C:\Program Files\GeoServer, and look for the web.xml file under \webapps\geoserver\WEB-INF

-

Uncomment the filter settings. There is a comment: <!-- Uncomment following filter to enable CORS in Jetty. Do not forget the second config block further down. -->

-

No need to touch the CORS setting for Apache Tomcat

-

There is one more block which has the comment <!-- Uncomment following filter to enable CORS -->. Enable the code below it.

2023・10・10

- Once WSL is installed, you can run WSL/Unix commands in Windows cmd by prefixing your commands with wsl, e.g. wsl ls.

Vector tile questions:

- pbf vs mvt vs pbtiles

- https://github.com/mapbox/vector-tile-spec/issues/69

- https://gis.stackexchange.com/questions/244341/difference-of-mapzen-vector-tiles-mvt-to-pbf-extracted-from-mbtiles

Overview of Vector Tiles - YouTube

2023・10・09

2023・10・08

Mistakes I won’t make again:

-

Ignoring bugs that I can’t reproduce consistently, they’re the most important to fix.

-

Being so burnt out that I fail to tell others I’m burnt out.

-

Not asking for support when I need it.

-

Not pushing for planning when I know we really need it

-

Pushing ahead with no real plan.

2023・10・03

-

open source notion like text editor - https://www.blocknotejs.org

-

full stack typescript alternative to firebase - https://www.convex.dev/

TypeScript projects like ts-sql blow my mind.

2023・10・02

Looking at more modern web mapping tech choices

2023・09・27

- Working with Playwright a lot to crawl a website for data. It’s very fiddly but I’m making progress. The high level workflow that seems to be working is to use the Locators API to narrow down the block of elements I’m interested in, then use $() (get one element handle) or $$() (multiple handles) to parse the block and extract data from a DOM element.

2023・09・21

- Download Overture parquet files here - https://github.com/OvertureMaps/data

2023・09・18

- Continuing to scout the current GIS landscape for current trends

- GeoParquet - a new format for storing geospatial data

- Overture Maps - a redistribution of OSM that enhances geospatial data. A new initiative by Amazon, Meta, Microsoft and TomTom. It outputs to GeoParquet

- DuckDB - file based high performance DB which can help process geoparquet to CSV or any other format

- Protomaps/ PMTiles - a new way to host vector tiles efficiently

2023・09・12

Jason Fried on company culture:

Culture simply happens. It’s emergent behavior. There’s nothing to do, it just is.

A company’s culture is a 50-day moving average. It’s what you’ve been collectively doing as a company over the last 50 days.

2023・09・10

Agency life has been tough. No doubt about it. Reflecting on whats been good and whats been bad

The good:

- Forced to learn quickly and you will level up in a short amount of time

- Learn new tech (at least in my company)

- Lots of projects so it’s hard to get bored

The bad:

- No time to learn tech deeply

- Ship fast and test nothing

- Deadline pressures vary from project to project. But when its on, it’s on!

- Lots of projects, but you end up just implementing the same thing over and over and over and over again…

2023・08・26

- Gotta get up to speed with GIS tech stacks again. Seems GeoServer is still the de facto way to publish geospatial services to the web https://docs.geoserver.org/stable/en/user/gettingstarted/postgis-quickstart/index.html .

2023・08・20

Great to be getting excited about Geospatial things again. Starting to think about what a modern GIS stack looks like? When I last looked it was PostGIS , GeoServer , Leaflet / Mapbox. New things I want to explore. Vector tiles / ProtoMaps, DeckGL .

2023・07・04

2023・06・20

- revisiting GQL and TS codegen . this article has jogged my memory on how to set it all up: https://www.datocms.com/blog/how-to-generate-typescript-types-from-graphql

2023・06・12

- Been playing with react instant search hooks and I’m very impressed. They’ve thought of everything. This small bit of code does anything you want a search UI to do:

import {

Configure,

Highlight,

Hits,

InstantSearch,

Pagination,

RefinementList,

SearchBox,

} from 'react-instantsearch-hooks-web';

import { history } from 'instantsearch.js/es/lib/routers';

import algoliasearch from 'algoliasearch/lite';

const routing = {

router: history(),

};

const searchClient = algoliasearch(

'A5NH1O01I7',

'26c89c07aa764a90782aff6ffba5ac66'

);

function Hit({ hit }) {

return (

<article>

<h1>

<Highlight attribute="post_title" hit={hit} />

</h1>

</article>

);

}

const Page = () => {

return (

<div>

<InstantSearch

searchClient={searchClient}

indexName="my_first_index"

routing={routing}>

<SearchBox

classNames={{

root: 'h-3',

}}

/>

<RefinementList attribute="categories" />

<Hits hitComponent={Hit} />

<Pagination />

</InstantSearch>

<Login />

</div>

);

};

This gives a search box which populates the UI with results. We can filter by facets and there is text highlighting. To override classes we can use Tailwind with the classNames prop they provide. Each class can be overridden.

2023・06・01

Reading React Essentials for Next.js 13.

- Server components can’t be imported into client components. However, if a client component exposes a “hole” for a React node, e.g. with children or a custom prop, a server component can be passed through there. This means something like this is possible:

<RootServerComponent>

<ClientComponent>

<ChildServerComponent />

</ClientComponent>

</RootServerComponent>

- If a server environment key which is not prefixed with NEXT_PUBLIC_ is leaked to the client, Next.js will convert it to an empty string

- to prevent this there is the server-only package, which can be installed with npm install server-only and imported like so:

import 'server-only';

- Context will work, but you will probably need to wrap all third party providers in a 'use client' component, e.g.:

'use client';

import { ThemeProvider } from 'acme-theme';

import { AuthProvider } from 'acme-auth';

export function Providers({ children }) {

return (

<ThemeProvider>

<AuthProvider>{children}</AuthProvider>

</ThemeProvider>

);

}

2023・05・27

- learnings on React.use() written up as a permanent note quick-look-at-react-use-hook .

2023・05・25

-

Notes from React RFC for first class support for promises

- React client components can’t be asynchronous. In React 18, if you need to run some async code you can now make use of the use hook. This hook is special: for one thing, it can be used conditionally, which breaks one of the rules of hooks.

function Note({id, shouldIncludeAuthor}) {

const note = use(fetchNote(id));

let byline = null;

if (shouldIncludeAuthor) {

const author = use(fetchNoteAuthor(note.authorId));

byline = <h2>{author.displayName}</h2>;

}

return (

<div>

<h1>{note.title}</h1>

{byline}

<section>{note.body}</section>

</div>

);

}

Reminder of how to handle double fetching in client-side React when using useEffect:

useEffect(() => {

let ignore = false;

async function startFetching() {

const json = await fetchTodos(userId);

if (!ignore) {

setTodos(json);

}

}

startFetching();

return () => {

ignore = true;

};

}, [userId]);

2023・05・23

- Arc Browser a.k.a The Browser Company are one to keep an eye on. They’re making cross platform app development possible with Swift. Swift is a language I feel like I’ve been sleeping on. It looks super ergonomic to work with and many great iOS apps have been developed with it. It promises a UI development DX as good as React. https://www.youtube.com/watch?v=Xa_fNuaSE_IaaA

2023・05・22

- You can extend shopify dashboard to have custom fields to extend UI aspects of your store front with metafields and meta objects.

2023・05・21

revisiting 20211101 and trying to keep footers in the correct location. This works well

<body>

<main><!-- Main content --></main>

<footer><!-- Footer content --></footer>

</body>

body {

min-height: 100vh;

display: flex;

flex-direction: column;

}

footer {

margin-top: auto;

}

2023・05・20

rewriting jweidesigns.com with next 13 app dir today some thoughts:

- typing url params still sucks, there has got to be a better way:

type BlogSlugPageProps = {

params: { slug: string };

};

export default function BlogSlugPage({ params }: BlogSlugPageProps) {

const slug = params.slug;

return <>Slug page | {slug}</>;

}

- you can’t “opt out” of global layouts. Instead it’s important to think about layouts in terms of layout groups. But how do you wrap the index page into a group? 🤔

turns out this is the more informative doc that explains how route groups work https://nextjs.org/docs/app/building-your-application/routing/route-groups

- it works by wrapping sections of your UI code into folders named with parentheses
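A toy sketch of how I understand the mapping: folders in parentheses organise files and layouts but do not show up in the URL. This is just string handling to illustrate the idea, not how Next.js resolves routes internally. The function name and example paths are made up.

```typescript
// Convert an app-directory file path into the URL path it would serve,
// dropping route-group folders such as "(marketing)".
function routeFromAppPath(filePath: string): string {
  const segments = filePath.split("/").filter((segment) => segment.length > 0);
  // Drop the leading "app" folder if present.
  if (segments[0] === "app") segments.shift();
  // Drop the trailing page file.
  const last = segments[segments.length - 1];
  if (last !== undefined && /^page\.(tsx|ts|jsx|js)$/.test(last)) segments.pop();
  // Route groups are folders wrapped in parentheses; they are not part of the URL.
  const visible = segments.filter((segment) => !/^\(.+\)$/.test(segment));
  return "/" + visible.join("/");
}
```

Under this reading, `app/(home)/page.tsx` maps to `/`, which would be one way to put the index page in its own group with its own layout.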

2023・05・19

- Working through Total Typescript and collecting notes at total-typescript-react-notes

2023・03・05

Not kept up my notes for soo long because of work projects! 😭

- I have been working with framer motion. The big revelation I’ve had with framer is thinking about everything as variants. When we key our animation objects into a variants object like so…

const container = {

hidden: { opacity: 1, scale: 0 },

visible: {

opacity: 1,

scale: 1,

transition: {

delayChildren: 0.3,

staggerChildren: 0.2

}

}

}

… the magic behind framer motion is that it knows how to tween between the two animation states when you provide the keys of the variants object to Framer component props, e.g. animate

- Things get really interesting with framer when you pass motion values into motion components. A motion element is a component that looks like this:

import { motion } from 'framer-motion'

...

<motion.div> I behave like a regular div </motion.div>

The style prop has superpowers now and can accept “motion values”:

import { motion, useMotionValue, useMotionValueEvent } from 'framer-motion'

function Component() {

const x = useMotionValue(0)

useMotionValueEvent(x, "animationStart", () => {

console.log("animation started on x")

})

useMotionValueEvent(x, "change", (latest) => {

console.log("x changed to", latest)

})

return <motion.div style={{ x }} />

}

In this example, the x value can be passed into style and the component can magically animate its x position without tonnes of re-renders. Framer does its animations outside of the React re-rendering process

2023・02・01

- render children when using forwardRef in React

import * as React from 'react'

type ButtonProps = React.HTMLProps<HTMLButtonElement>

const FancyButton = React.forwardRef<HTMLButtonElement, ButtonProps>((props, ref) => (

<button type="button" ref={ref} className="FancyButton">

{props.children}

</button>

))

// You can now get a ref directly to the DOM button:

const ref = React.createRef<HTMLButtonElement>()

<FancyButton ref={ref}>Click me!</FancyButton>

2023・01・29

- Garmin apps development notes:

  - The Monkey C VS Code extension is key

  - if `java` is not picked up in the terminal, download Java from here - https://www.oracle.com/uk/java/technologies/downloads/#jdk19-mac

  - tutorials:

2023・01・24

- git-sim is a tool to visualise your git actions. Installing on an M1 Mac is awkward though:

  - brew install py3cairo ffmpeg

  - pip3 install manim

  - pip3 install git-sim

- then we can run something like

git-sim merge main

2023・01・15

- Remix pushes you to use `<form/>` to make calls to the server. We’re probably more used to sending a `post` with something like `fetch` or `axios`, but Remix teaches you about vanilla form actions. When paired with an `action` function that processes the submission server side, it makes for a really tidy DX. Interestingly, form actions work with the browser `<form>` element, which causes a full page reload. If you need a more SPA feel, Remix also provides a `<Form>` component.

random tinkerings:

- storybook compositions is the only way to go if you want to test different frameworks right now

2023・01・14

2023・01・13

2023・01・12

2023・01・11

- a little gotcha with React Query: `onMutate` is different from `mutationFn`! Reminder to always read the docs!

- https://tkdodo.eu/blog/mastering-mutations-in-react-query#some-callbacks-might-not-fire

2023・01・10

- machine learning models as a service https://replicate.com/

- add prisma to an nx project

yarn add -D @nx-tools/nx-prisma

- add remix to an nx project

2023・01・09

-

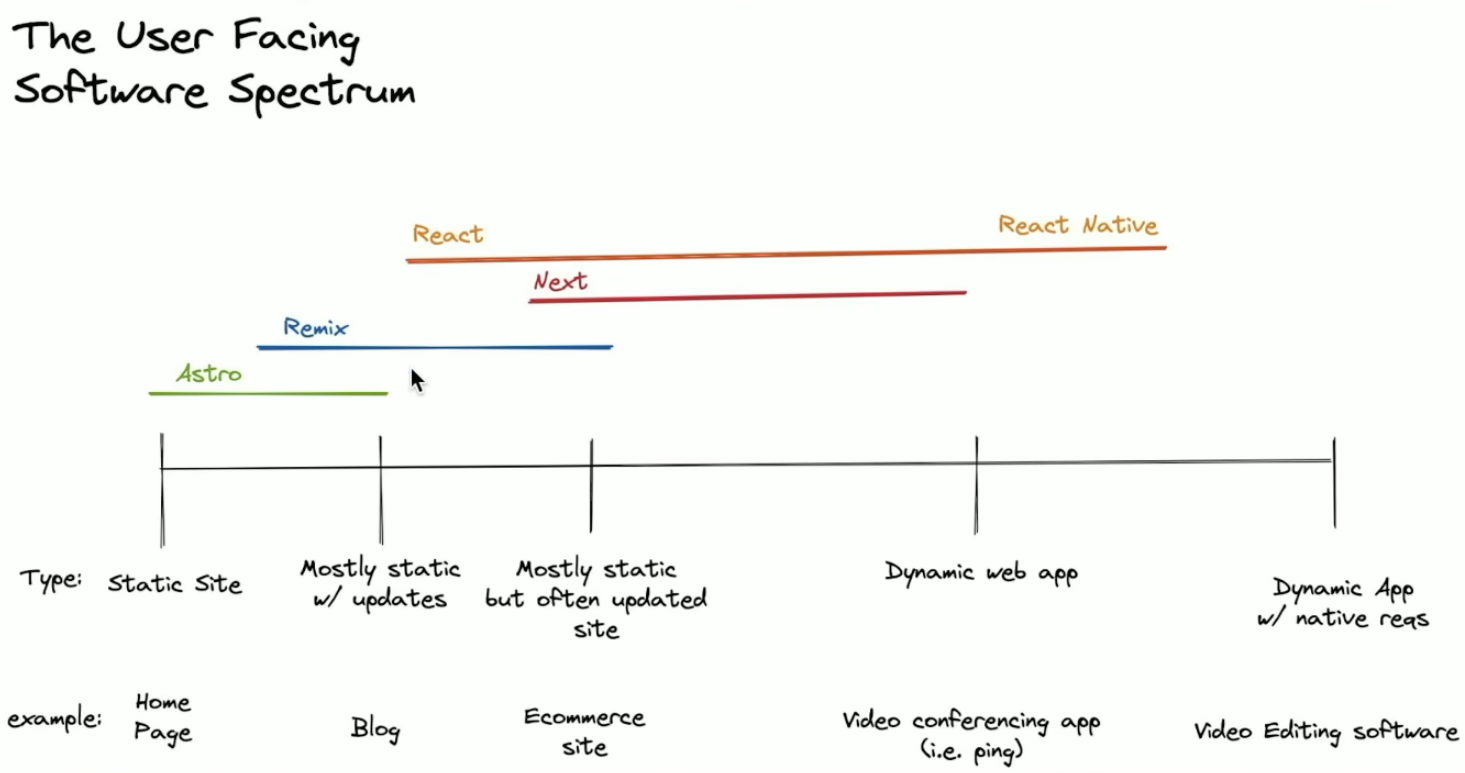

The user facing software spectrum

-

good things about Remix

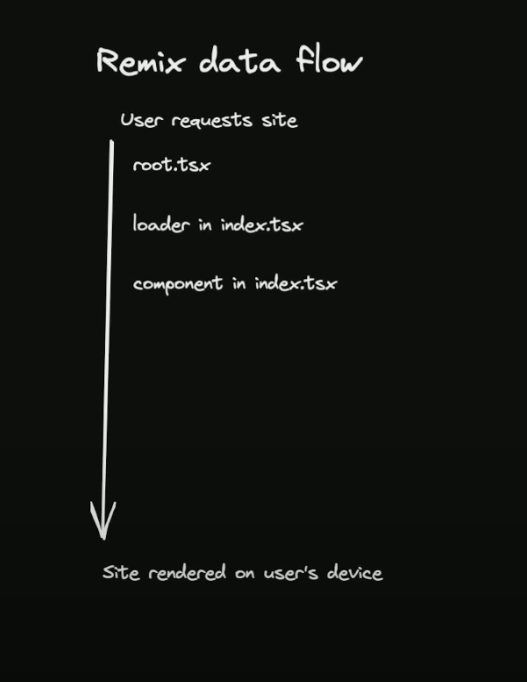

- simple data flow :

-

- simple data flow :

-

-

Load the data right in the same component. (next.js 13 can do this too now…)

-

Mutation with form actions handles server-side write functionality cleanly.

-

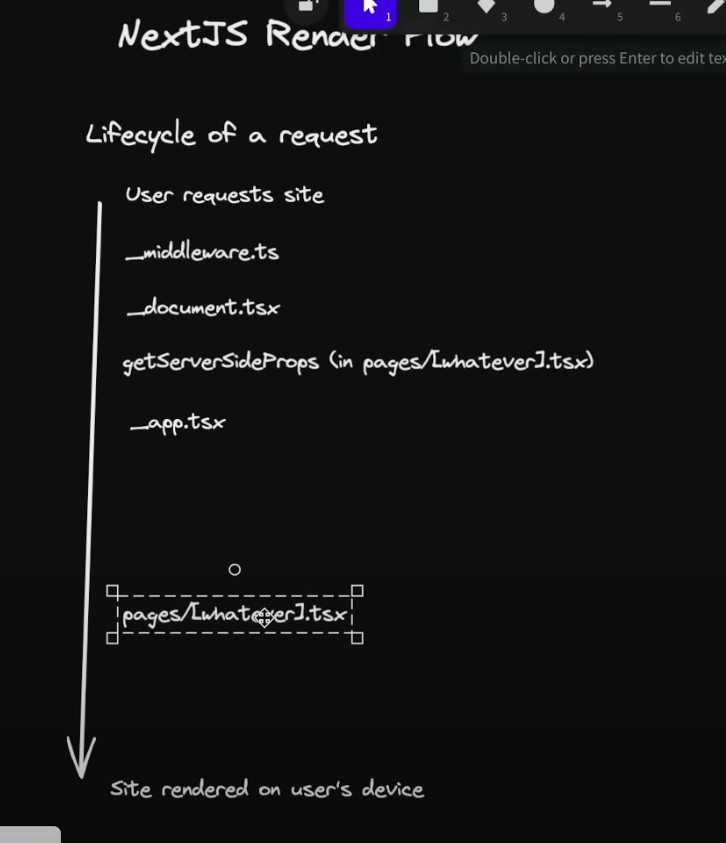

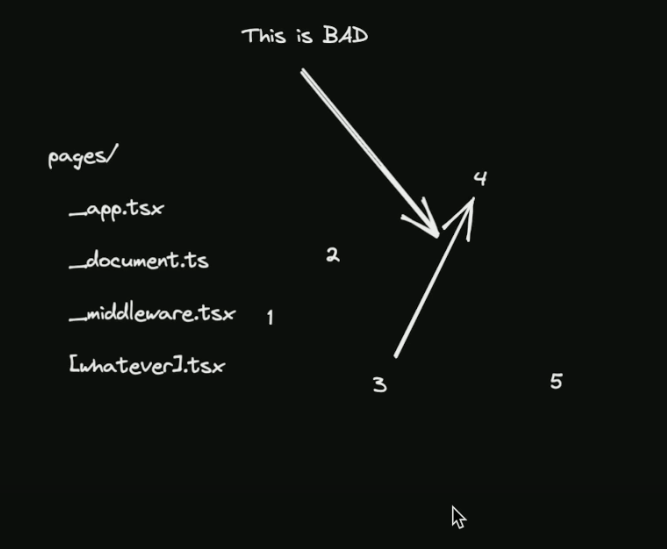

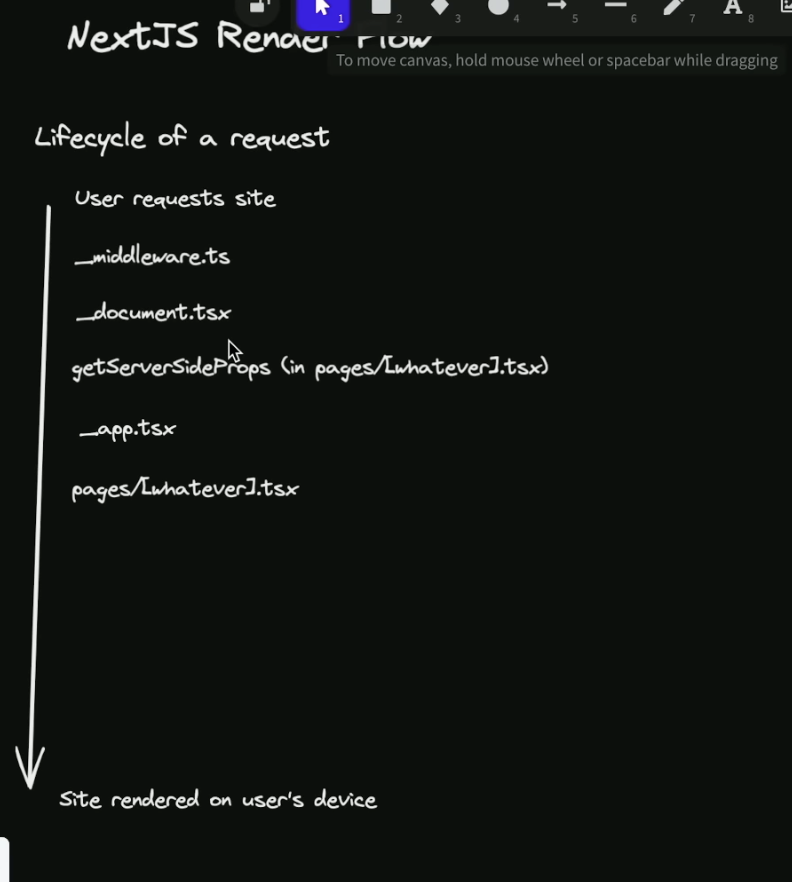

confusing things about next.js:

- The flow of data in the app is confusing

  - Map this order to file structure and you can see how confusing it is

2023・01・08

- useful tool to package up a TS lib into different entry points for `.cjs` and `.mjs` - https://github.com/privatenumber/pkgroll

2023・01・07

2023・01・04

- nx gotchas:

  - `nx: command not found` error - restarting the whole laptop fixes this!?

2022・12・28

2022・12・27

- Keep learning so much CSS from this shiny card animation

When a div is absolutely positioned and you want it to fill its parent, you can use `inset: 0`, which is the same as:

top: 0;

right: 0;

bottom: 0;

left: 0;

CSS radial gradients can get complicated. At its most basic, it’s a function that can take as many colours as you want and distributes them evenly in a circular fashion.

background: radial-gradient(red, green, blue);

We can add a percentage that defines each colour’s stop point. CSS blends the colours for us.

background: radial-gradient(red 10%, green 20%, blue 80%);

OK, so there is also a secret optional preliminary argument too… MDN documents the radial-gradient function like so:

radial-gradient( [ <ending-shape> || <size> ]? [ at <position> ]? , <color-stop-list> )

<ending-shape> - can either be circle or ellipse. An ellipse is basically just a circle stretched to match the aspect ratio of the element it’s in.

<size> - has four options documented here

- closest-side

- closest-corner

- farthest-side

- farthest-corner (default)

<position> - defaults to center but supports an x and y. This is the param that we can make dynamic via CSS variables to achieve interesting effects like a spotlight effect
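A minimal sketch of how the dynamic position could be driven from the pointer. It assumes two custom properties named `--x` and `--y` feed the gradient's `at <position>`. The helper name `pointerToGradientPosition` and the `Box` shape are made up for illustration. The DOM wiring is left as a comment so the function itself stays pure.

```typescript
// Hypothetical helper: converts a pointer position into percentage strings
// suitable for `at var(--x) var(--y)` in a radial-gradient.
interface Box {
  left: number;
  top: number;
  width: number; // assumed to be non-zero
  height: number; // assumed to be non-zero
}

function pointerToGradientPosition(
  clientX: number,
  clientY: number,
  box: Box
): { x: string; y: string } {
  // Keep the spotlight inside the element even if the pointer leaves it.
  const clamp = (value: number) => Math.min(100, Math.max(0, value));
  const x = clamp(((clientX - box.left) / box.width) * 100);
  const y = clamp(((clientY - box.top) / box.height) * 100);
  return { x: `${x}%`, y: `${y}%` };
}

// In a browser this could be wired up roughly like so (`card` is an assumed element):
// card.addEventListener("pointermove", (e) => {
//   const { x, y } = pointerToGradientPosition(e.clientX, e.clientY, card.getBoundingClientRect());
//   card.style.setProperty("--x", x);
//   card.style.setProperty("--y", y);
// });
```

Because only the custom properties change, the gradient declaration itself never needs to be rewritten from script.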

so our simple CSS radial gradient could now look like this:

background: radial-gradient(farthest-side circle at var(--x, 0%) var(--y, 10%), red 10%, green 20%, blue 80%);

2022・12・21

- Discovered the CSS `perspective` property today which, when used in tandem with `transform: rotate()`, can create some really cool 3D effects.

- `perspective` must be applied to the parent of the element you are running the transform on.

2022・12・18

- shibe.store went live yesterday!

2022・12・17

- Massively descoped shiba swag store from medusa.js and used vercel commerce as a UI template and swapped out the static demo for a sanity CMS backend so updates were easier.

2022・12・16

2022・12・12

- ChatGPT reminding me how to do SVG animations:

rotate a svg

To animate a group of SVG elements and make them spin indefinitely, you can use the <g> element to group them together, and then use the <animateTransform> element within the <g> element to animate the group as a whole. Here is an example that shows how to create an infinitely spinning group of SVG elements:

<svg> <g id="group"> <rect x="10" y="10" width="100" height="100" /> <circle cx="50" cy="50" r="40" /> </g> <animateTransform xlink:href="#group" attributeName="transform" type="rotate" from="0" to="360" dur="3s" repeatCount="indefinite" /> </svg>

In this code, the <g> element is used to group the <rect> and <circle> elements together, and the <animateTransform> element is used to animate the group by rotating it indefinitely. The xlink:href attribute of the <animateTransform> element specifies the target of the animation (in this case, the <g> element with the id of "group"). The other animation attributes, such as attributeName, type, from, to, dur, and repeatCount, are used to control the animation in the same way as described in the previous answer.

rotate from the center of the svg

To rotate an SVG image from its center, you can use the transform-origin attribute and specify the center point of the rotation. Here is an example that shows how to rotate an SVG image from its center:

<svg>

<image xlink:href="image.svg" transform="rotate(45)" transform-origin="50% 50%" />

</svg>

In this code, the transform-origin attribute is set to "50% 50%", which specifies the center of the rotation (i.e. the center of the image). The transform attribute is used to specify the rotation angle (in this case, 45 degrees).

Alternatively, you can use the <g> element and specify the rotation transformation and the transform-origin attribute on it, like this:

<svg>

<g transform="rotate(45)" transform-origin="50% 50%">

<image xlink:href="image.svg" />

</g>

</svg>

In this case, all the elements within the <g> element will be rotated by 45 degrees around the center of the group.
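A small sketch of an alternative that does not rely on `transform-origin`: SVG's `rotate()` transform also accepts an explicit centre as `rotate(angle cx cy)`. The helper name `rotateAboutCenter` and the `BBox` shape are assumptions made for illustration. In a browser the box could come from something like `getBBox()`.

```typescript
// Hypothetical helper: builds an SVG transform string that rotates
// around the centre of a bounding box.
interface BBox {
  x: number;
  y: number;
  width: number;
  height: number;
}

function rotateAboutCenter(angleDeg: number, box: BBox): string {
  const cx = box.x + box.width / 2;
  const cy = box.y + box.height / 2;
  // SVG syntax: rotate(<angle> <cx> <cy>)
  return `rotate(${angleDeg} ${cx} ${cy})`;
}

// The <rect> from the earlier example: x=10, y=10, 100 wide, 100 tall.
const transform = rotateAboutCenter(45, { x: 10, y: 10, width: 100, height: 100 });
console.log(transform);
```

The resulting string can be set as the `transform` attribute of the element or group.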

2022・12・02

- Carousels have so many types of UX

- Draggable

- Draggable with snapping

- Free slide

- Paginated

- Slidable with scroll wheel

The most basic carousel is probably just CSS overflow and snapping. But the moment you want “drag” functionality, this logic doesn’t work and we need to transform a div by “sliding” it along the x-axis. Once we do this, we need to do all the hard work of figuring out the left-x position of every slide. These become our slide points, which can be used for writing our own snapping functionality and pagination.

- this is a good demo that manages to keep native scroll functionality along with a drag mechanism - https://codepen.io/thenutz/pen/VwYeYEE
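The slide-point idea can be sketched in a few lines. This is a simplified model under stated assumptions: slide widths and the gap are known up front, there is at least one slide, and `slidePoints` / `snapTo` are hypothetical names, not taken from the demo above.

```typescript
// Left-x position of every slide, measured from the start of the track.
function slidePoints(slideWidths: number[], gap = 0): number[] {
  const points: number[] = [];
  let left = 0;
  for (const width of slideWidths) {
    points.push(left);
    left += width + gap;
  }
  return points;
}

// Given how far the track has been dragged, pick the nearest slide point.
// Assumes `points` is non-empty.
function snapTo(points: number[], offset: number): { index: number; x: number } {
  let index = 0;
  for (let i = 1; i < points.length; i++) {
    if (Math.abs(points[i] - offset) < Math.abs(points[index] - offset)) {
      index = i;
    }
  }
  return { index, x: points[index] };
}

const points = slidePoints([300, 300, 300], 16);
const snapped = snapTo(points, 400);
// The track would then be moved with something like:
// track.style.transform = `translateX(${-snapped.x}px)`
console.log(points, snapped);
```

The `index` doubles as the current page for pagination, and the same points can drive next/previous buttons.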

2022・12・01

- ChatGPT is wild, asked for `function to find closest number given an array of numbers` and got:

/**

To find the closest number to a given number in an array of numbers, you can use a combination of the Math.abs() and Math.min() methods.

The Math.abs() method returns the absolute value of a number, which is the number without its sign (positive or negative). This is useful for comparing the distance between two numbers, because the distance between two numbers is always positive.

The Math.min() method returns the smallest number in a given array of numbers. This is useful for finding the smallest absolute difference between a given number and the numbers in an array.

Here is an example of a function that uses these methods to find the closest number to a given number in an array:

*/

function findClosestNumber(numbers, target) {

const distances = numbers.map(number => Math.abs(number - target));

const closestIndex = distances.indexOf(Math.min(...distances));

return numbers[closestIndex];

}

const numbers = [1, 5, 10, 15, 20];

const target = 12;

findClosestNumber(numbers, target)

// nearest will be 10

2022・11・30

- Implementing exit animations without framer motion feels rough! but this helped a lot:

2022・11・29

- To allow for full bleed elements in the CSS grid, we can’t use max-width on inner elements because we can’t “break out”. Instead, we can define the outer gutters as auto-growing columns. but what if we want to define the inner container as a specific size?

.layout {

display: grid;

grid-template-columns:

calc( 50vw - 500px )

repeat( 8, 1fr )

calc( 50vw - 500px);